HashiCorp is holding its annual user conference online this year, and I will be attending virtually to learn what is new and being announced around Terraform. The conference runs for two days, Oct 14th and 15th, preceded by two days of workshops on the 12th and 13th. This blog covers only part of the full schedule since not all of the presentations are Terraform-centric.

HashiConf Digital Opening Keynote

The opening keynote was interesting, with shots from the presenters’ homes. The number of attendees (12K) and the new employee count (1K) stood out. The rest was mostly marketing information about HashiCorp. Some interesting facts: 1K enterprise customers, 6K new users/month, and growth with cloud partners and technology partners. Certification program – http://hashicorp.com/certification. Learning program – http://learn.hashicorp.com

Vault as a Security Platform & Future Direction

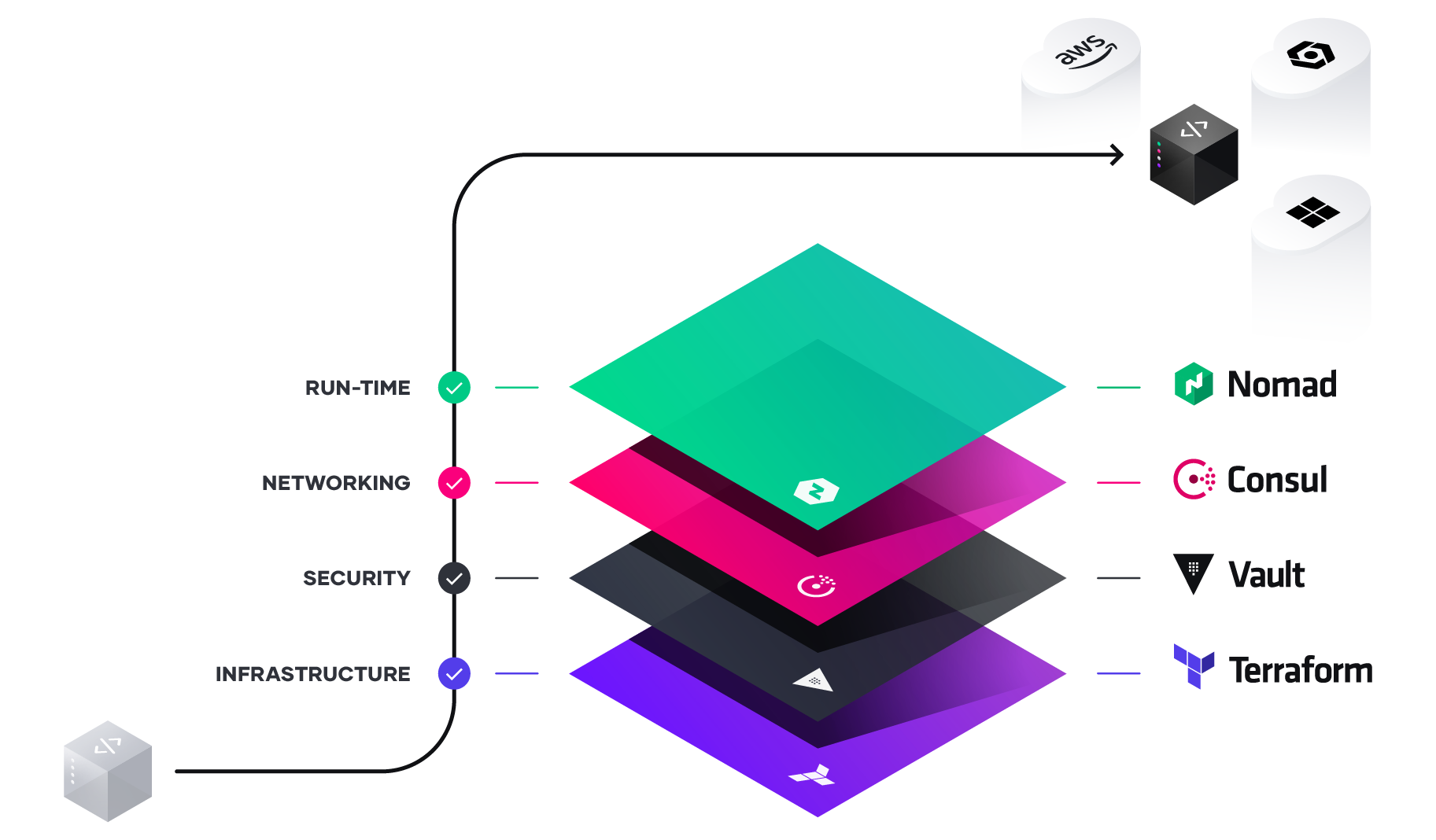

Vault is the security layer on top of Terraform and securely stores credentials and secrets for Kubernetes and other platforms. The bulk of downloads last year were for Vault used in conjunction with Kubernetes. The discussion continued with a banking customer that uses Vault to store API keys, certificates, and usernames/passwords. Vault also allows key rotation to be automated and X.509 certificates to be dynamically assigned and consumed.

There are two options for running Vault: the traditional download-and-run approach, or as SaaS on the HashiCorp Cloud Platform. Vault on AWS as a service was newly announced.

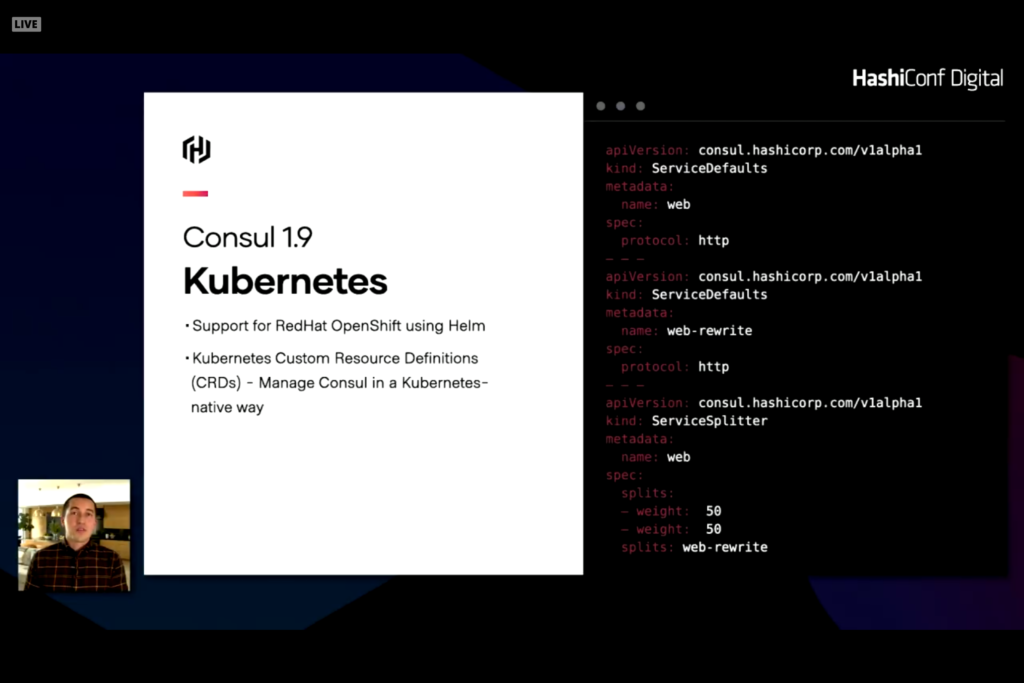

Consul is an extension of Vault that allows for network infrastructure automation, including service discovery as well as access rights, authorization, and connection health. Consul can reconfigure on-premises equipment such as Cisco devices and cloud network configurations such as load balancers, network security rules, and firewalls. Consul on AWS as a service was newly announced, as was Consul 1.9 with significant enhancements for Kubernetes.

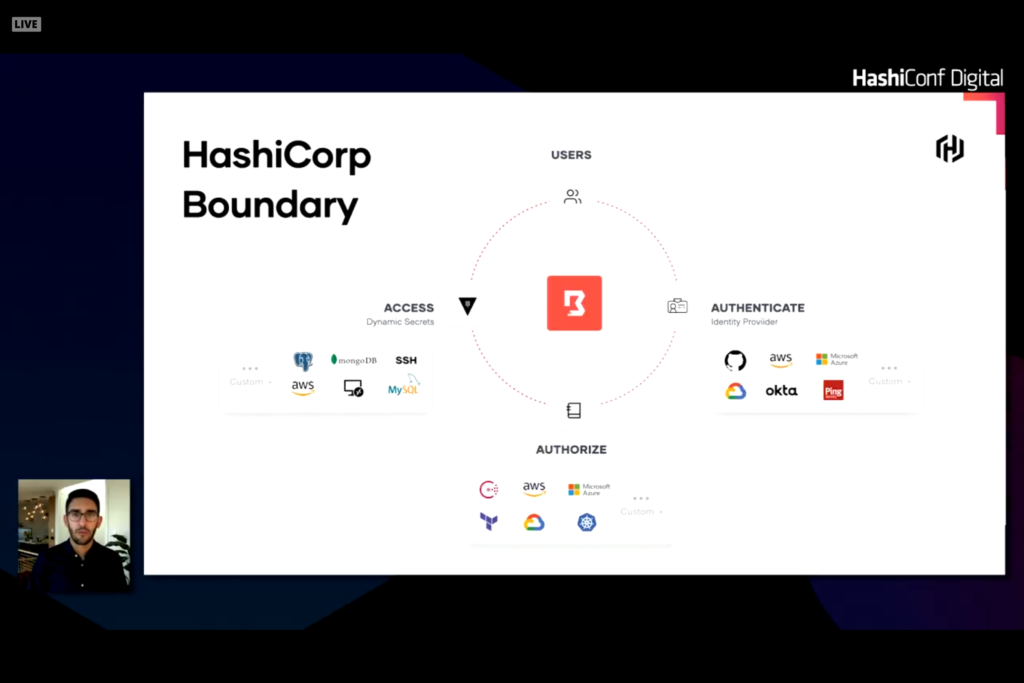

Human authentication and authorization is another layer that can cause problems with system configuration and automation. Traditional products like Active Directory or LDAP on-premises, or Okta or Azure AD in the cloud, can be leveraged to provide authentication (auth) and authorization (authz). The trick is how to extend these trusted sources to servers and services. Traditionally this was done with SSH keys or VPN credentials over a secure network with known IP addresses or hostnames. With dynamic services and hosts this connection becomes difficult. Leveraging services like Okta or Azure AD with role-based access for users or services is a better way of solving this problem. Credentials can be dynamically assigned to a role and rotated as needed. Back-end servers and services can then check these credentials with the auth service to confirm that the user or role is authorized for access. HashiCorp Boundary provides the linkages to make this work.

Boundary connects a pluggable identity provider to an authentication source to verify user identities. A second set of plugins connects to an authorization source and integrates with HashiCorp Vault to access services using stored secrets, which allows those secrets to be dynamic and rotated.

Vault as a Security Platform and Future Direction

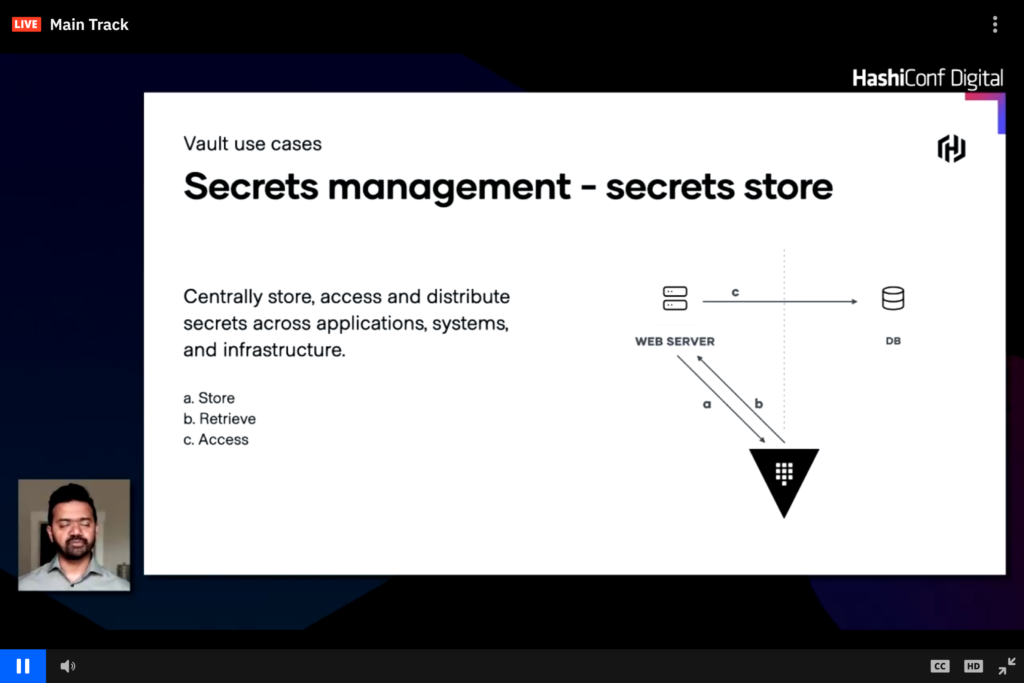

Vault centrally stores secrets for infrastructure

Vault can centrally store usernames and passwords, public and private keys, and other dynamic or secure credentials. In the image above, a web server pulls the database credentials from Vault rather than storing them in code or config files, and then uses these credentials to connect to the database. The workflow can easily be changed so that the web server requests credentials from Vault, Vault connects to the database to generate a short-lived auth token, and the token is passed back to Vault and then on to the web server.
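To make the short-lived credential idea concrete, here is a minimal Python sketch. It is an in-memory stand-in and not the Vault API: the `ToySecretsBroker` class, the `Lease` type, the `webserver` role name, and the 300 second lifetime are all assumptions made up for illustration.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass
class Lease:
    """A credential pair with an expiry time (hypothetical type)."""
    username: str
    password: str
    expires_at: float

    def is_valid(self, now: float) -> bool:
        # The credential is usable only before its expiry time.
        return now < self.expires_at


class ToySecretsBroker:
    """In-memory stand-in for a secrets broker; NOT the Vault API."""

    def __init__(self, ttl_seconds: float) -> None:
        self.ttl_seconds = ttl_seconds

    def issue_db_credentials(self, role: str, now: float) -> Lease:
        # A real broker would ask the database to create this account;
        # here we only generate random values and attach an expiry.
        return Lease(
            username=f"{role}-{secrets.token_hex(4)}",
            password=secrets.token_urlsafe(16),
            expires_at=now + self.ttl_seconds,
        )


broker = ToySecretsBroker(ttl_seconds=300)
issued_at = time.time()
lease = broker.issue_db_credentials("webserver", now=issued_at)

# Valid one minute after issue, expired ten minutes after issue.
print(lease.is_valid(issued_at + 60), lease.is_valid(issued_at + 600))
```

The point of the sketch is that the web server never holds a long-lived password: each request to the broker yields a fresh credential that stops working once its lifetime runs out.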

Building a Self-service vending machine to streamline multi-AWS account strategy

This presentation from Eventbrite described how they use Terraform and the HashiStack to manage a multi-account AWS solution. Multiple AWS accounts are needed to isolate different domains and solutions, and security can be controlled across all accounts through automation. The AWS Terraform Landing Zone (TLZ) quickly emerged as a solution. This product was introduced a year ago as a joint project between HashiCorp and Amazon.

The majority of the talk was business justification for managing multiple AWS accounts and an explanation of why AWS Control Tower would not work. From the discussion and chat it appears that TLZ is still in beta and could potentially make things easier.

Terraform in Regulated Financial Services

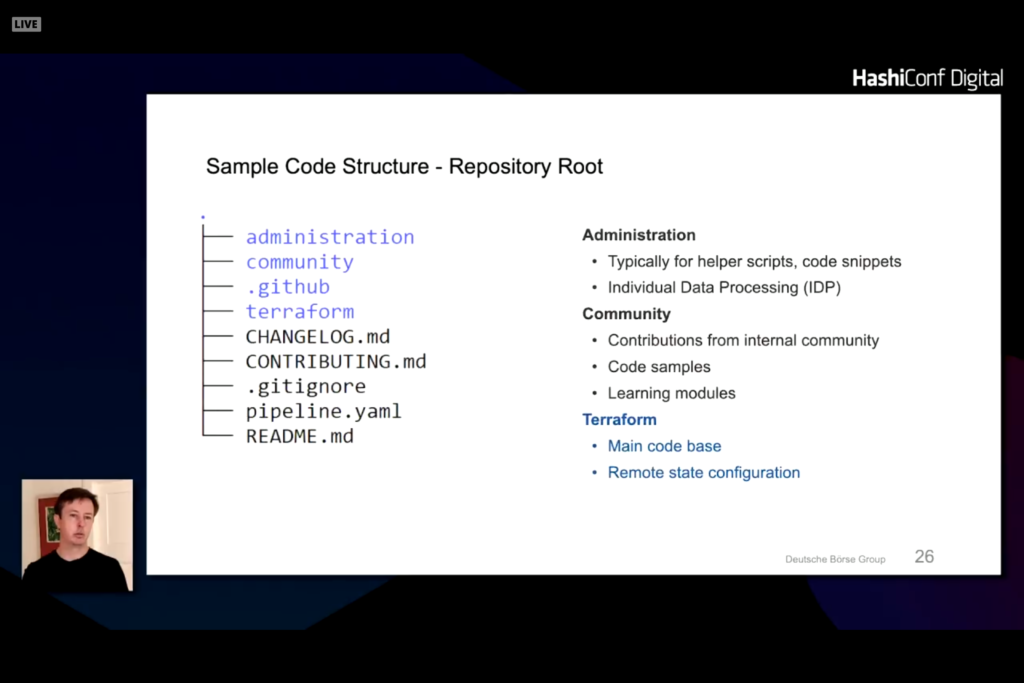

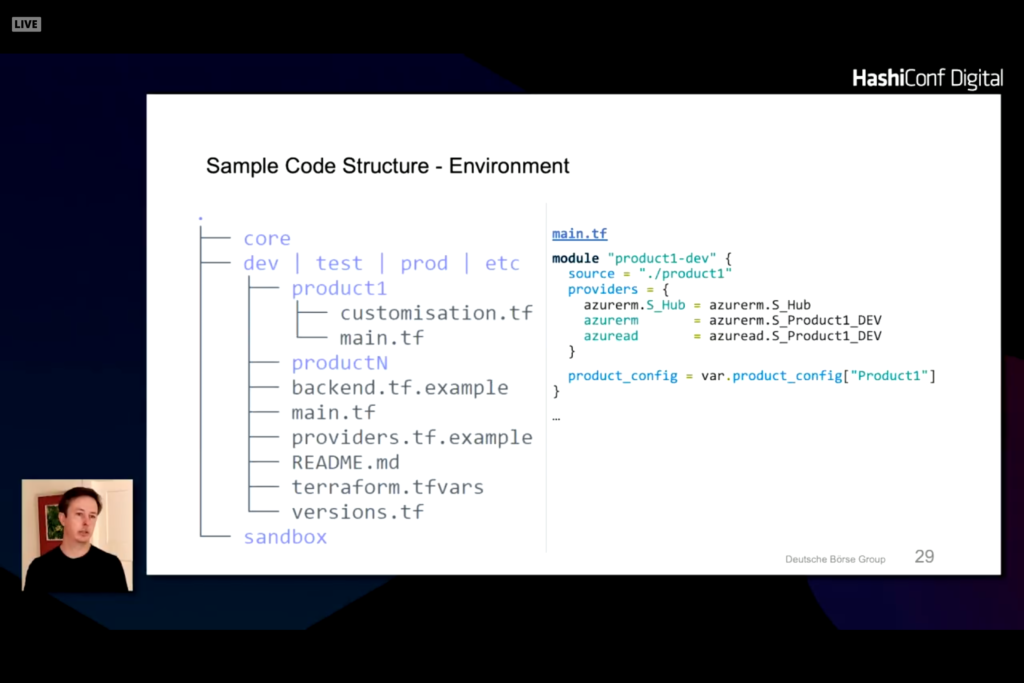

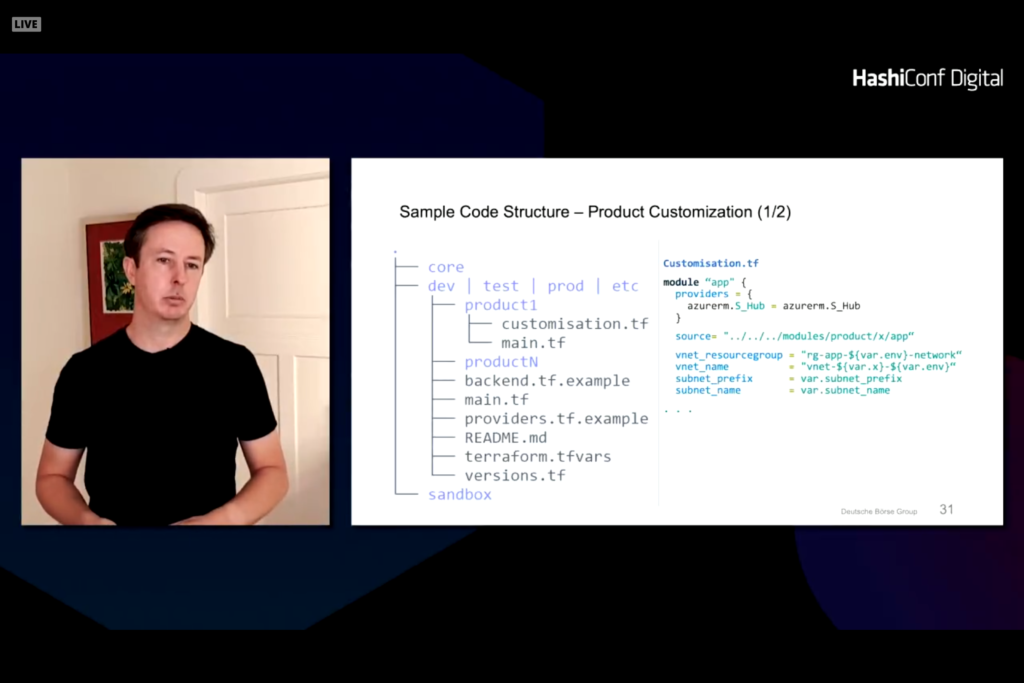

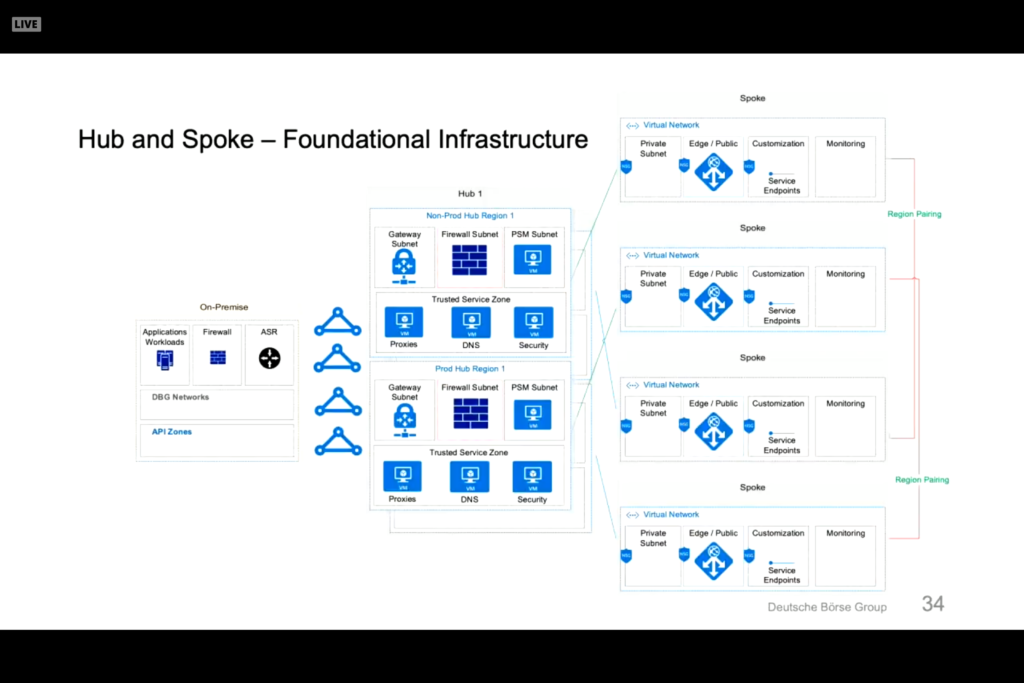

This was a customer presentation from Deutsche Boerse Group discussing Terraform deployment into AWS, Azure, and GCP for a fully automated electronic trading application. Terraform and Packer are the foundation for building and managing systems. Infrastructure as Code (IaC) helps with regulatory reporting and guidelines in the financial industry. Terraform helps define uniform policies and procedures. Code is designed and split into product zones that represent different applications or functions.

Under the terraform directory, the code is split into dev, test, prod, and etc directories, with product lists under each one.

Note that a few structures are common across all modules, while others are specific to a product or class of service. Network controls are managed through a central network definition. Customizations can be made to record changes that deviate from company policies and procedures.

A standard module for a hub can be defined for services like monitoring and network.

This results in a core module that is secure and compliant with environments.

Packer is layered on top of this to harden the operating system and provision customizations into each virtual machine. Ansible configures the machine and can deploy straight to a cloud provider through a private marketplace or personal template.

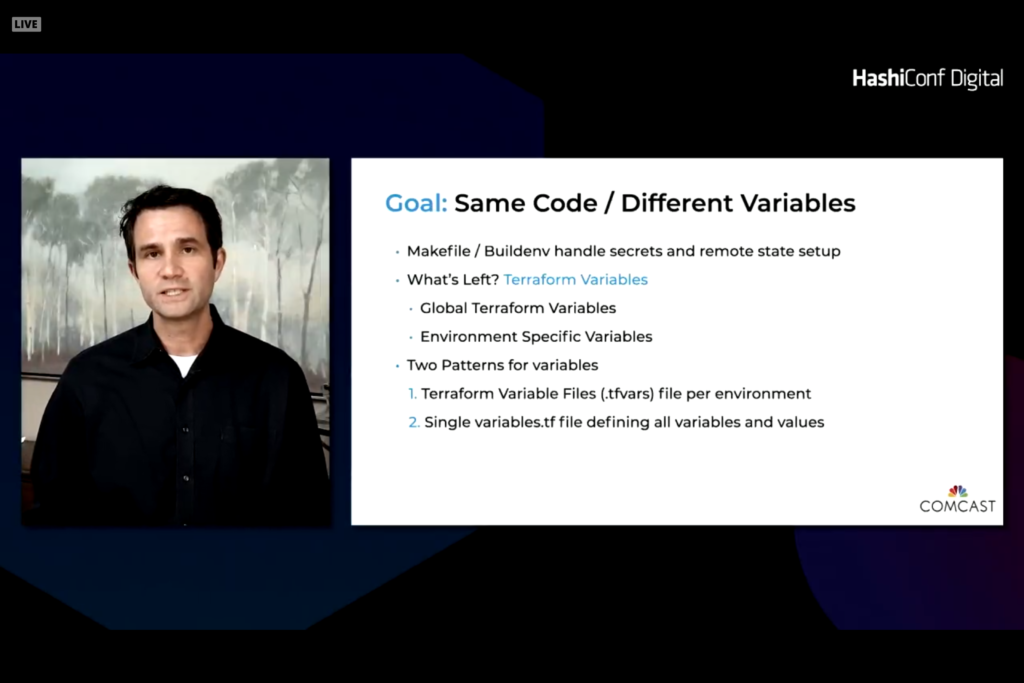

Terraform Consistent Development and Deployment

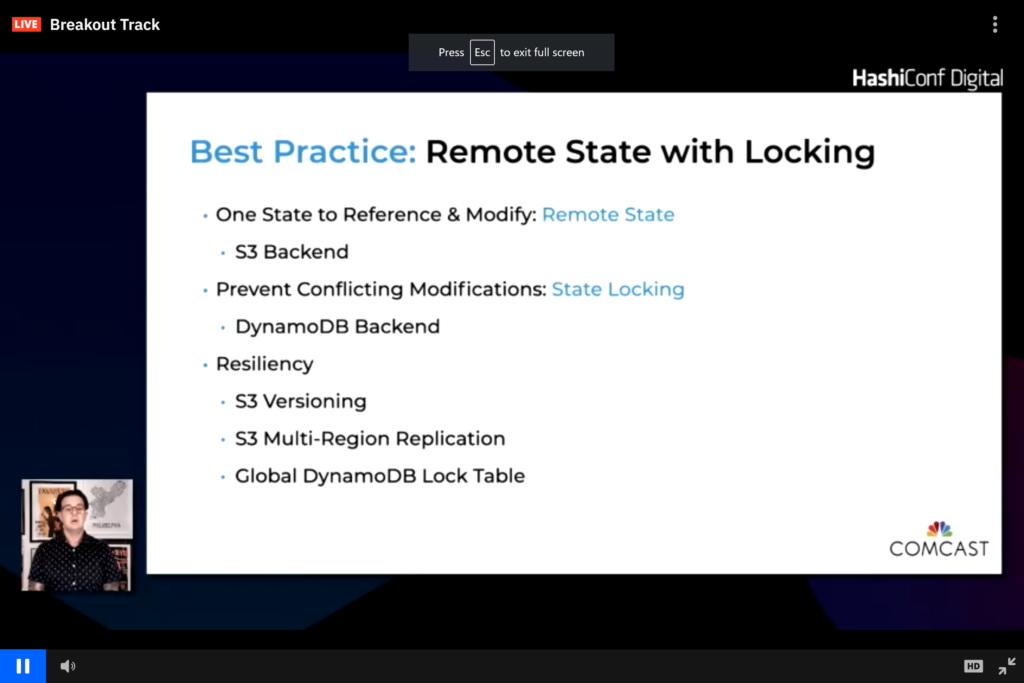

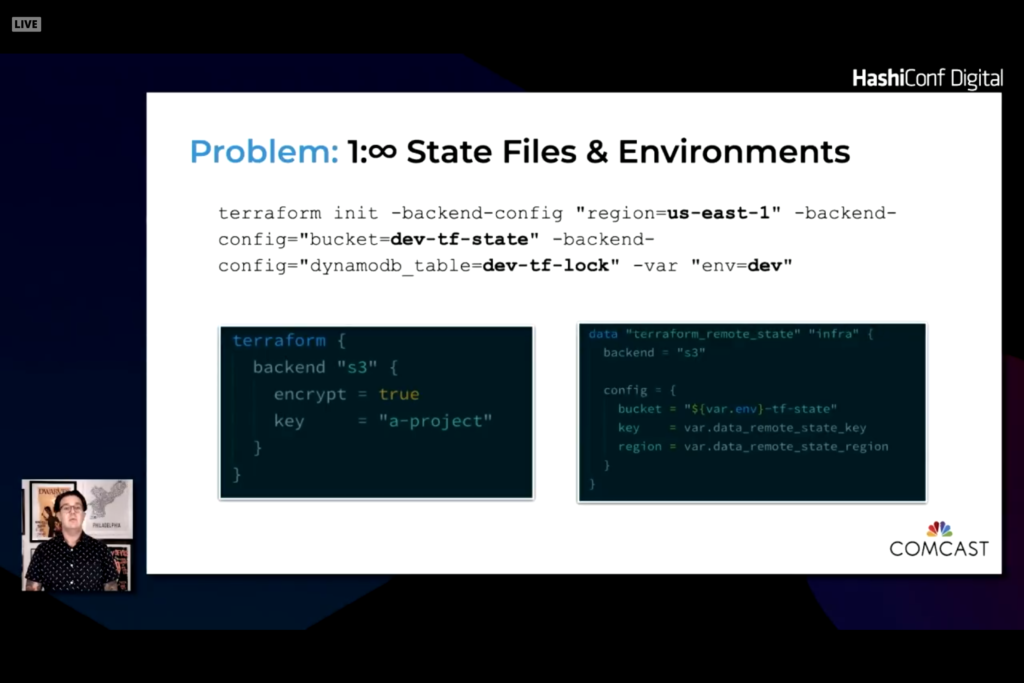

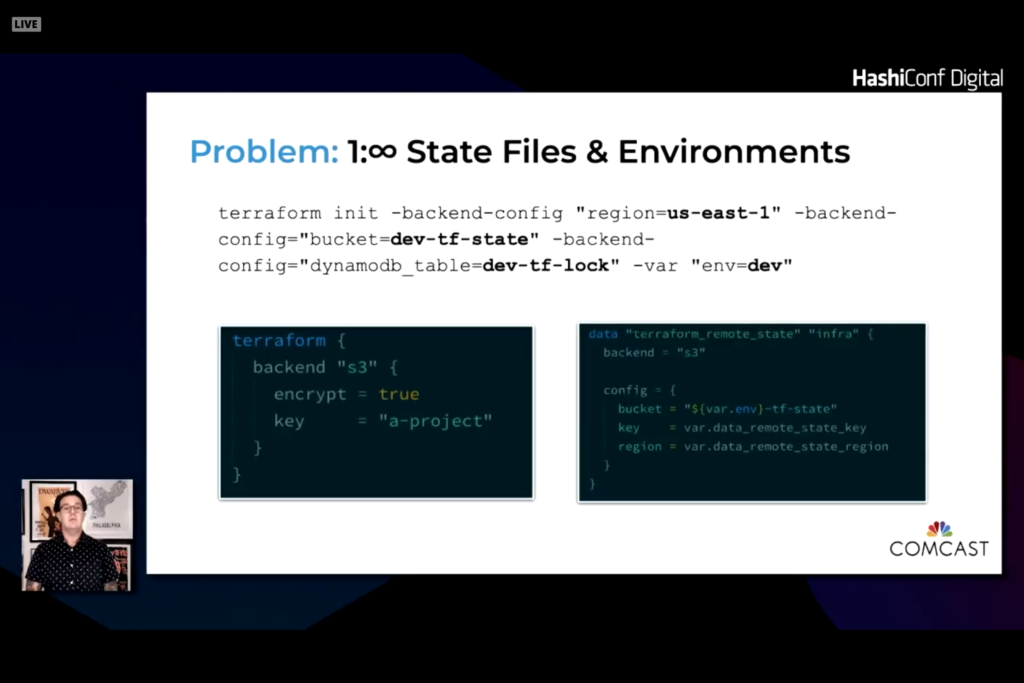

This presentation reviewed what Comcast has done with Terraform. The primary goals are consistency and accuracy. Having everyone run the same configuration and secrets helps reduce complexity. A secondary goal is to keep dev, test, and prod configurations the same across different regions and locations.

Bootstrap is done from a Git repository and then managed with a cloud storage backend.

State is stored and referenced from a common backend.

Use a Makefile with targets that run the proper terraform command with the proper environment variables. This allows you to integrate state, Vault, and secrets consistently on all desktops and in the CI/CD tool.

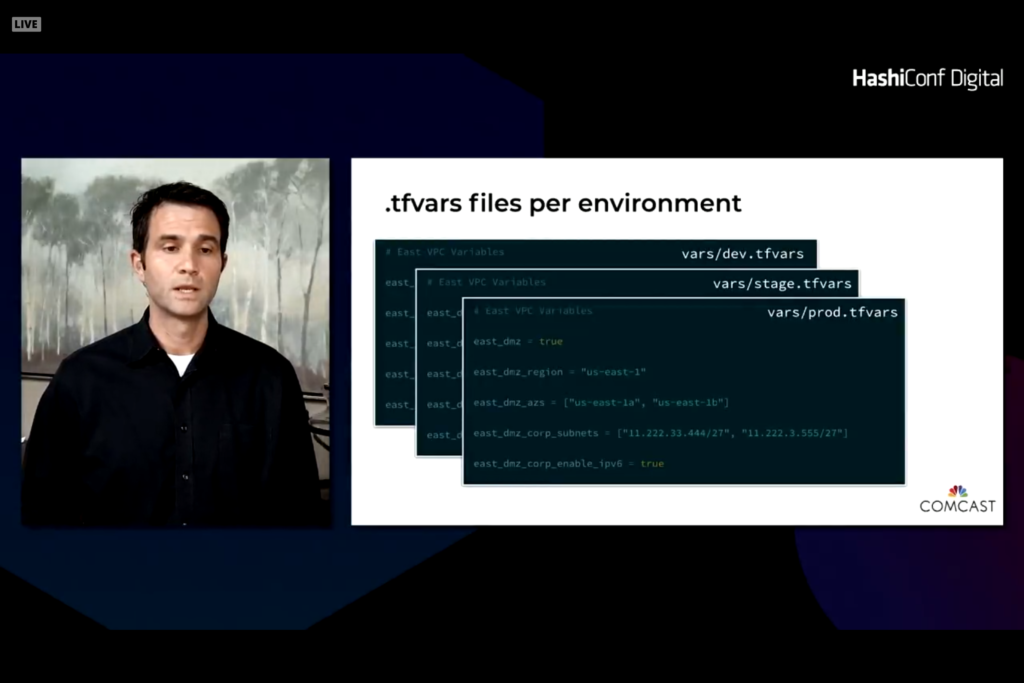

There are two levels of variables: the first is specific to a platform, the second is global. It is easy to set defaults and override them when needed. The difficulty is comparing two environments to see changes and differences.
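The two-level model and the comparison problem can be sketched in a few lines of Python. This is not Comcast's tooling; the function names and the variable values (`instance_type`, `region`, `replicas`) are hypothetical and chosen only to show platform values overriding global defaults and a diff between two resolved environments.

```python
def resolve(global_vars: dict, platform_vars: dict) -> dict:
    """Platform-specific values override global defaults."""
    return {**global_vars, **platform_vars}


def diff_environments(left: dict, right: dict) -> dict:
    """Return {key: (left_value, right_value)} for keys whose values differ.

    A key missing on one side is reported with None for that side.
    """
    changes = {}
    for key in sorted(left.keys() | right.keys()):
        a, b = left.get(key), right.get(key)
        if a != b:
            changes[key] = (a, b)
    return changes


# Hypothetical values for illustration only.
GLOBAL_DEFAULTS = {"instance_type": "t3.small", "region": "us-east-1", "replicas": 1}
DEV = resolve(GLOBAL_DEFAULTS, {"replicas": 1})
PROD = resolve(GLOBAL_DEFAULTS, {"instance_type": "m5.large", "replicas": 3})

print(diff_environments(DEV, PROD))
# → {'instance_type': ('t3.small', 'm5.large'), 'replicas': (1, 3)}
```

Comparing the resolved values rather than the raw override files is one way to answer "what is actually different between dev and prod", since an override that matches the default does not show up as a change.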

With this module you end up with a vars folder and a tfvars file unique to each environment. The Makefile pulls in the right values and ingests the desired tfvars file.
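As a rough illustration of what such a wrapper does, the Python sketch below picks the tfvars file for an environment and assembles the terraform command line. The `vars/<environment>.tfvars` naming and the list of environments are assumptions, not details from the talk; `-var-file` is the standard Terraform option for loading a variables file.

```python
from pathlib import Path

# Assumed environment names; the talk mentions dev, test, and prod.
KNOWN_ENVIRONMENTS = ("dev", "test", "prod")


def terraform_command(action: str, environment: str, vars_dir: str = "vars") -> list[str]:
    """Build a terraform command that loads the tfvars file for one environment."""
    if environment not in KNOWN_ENVIRONMENTS:
        raise ValueError(f"unknown environment: {environment!r}")
    # Assumed layout: one tfvars file per environment inside the vars folder.
    var_file = Path(vars_dir) / f"{environment}.tfvars"
    return ["terraform", action, f"-var-file={var_file.as_posix()}"]


print(" ".join(terraform_command("plan", "dev")))
# → terraform plan -var-file=vars/dev.tfvars
```

Because every developer and the CI/CD job go through the same entry point, nobody has to remember which variables file belongs to which environment.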

Remainder of presentations

The remaining presentations covered Vault or Consul. I primarily wanted to focus on Terraform deployments and presentations in this blog. More tomorrow, given that day 2 is more Terraform focused.


buy prescription drugs online legally comfortis without vet prescription

https://medrxfast.com/# best canadian pharmacy online

best ed pills non prescription prescription drugs online without

ranitidine over the counter buy meloxicam 15mg sale buy flomax 0.2mg online

https://medrxfast.com/# canadian online pharmacy

ed meds online without prescription or membership tadalafil without a doctor’s prescription

canadian drug pet meds without vet prescription

ondansetron 4mg cheap spironolactone 25mg price finasteride 5mg pills

how can i order prescription drugs without a doctor comfortis without vet prescription

https://medrxfast.com/# prescription drugs

https://medrxfast.com/# cvs prescription prices without insurance

ondansetron online buy purchase finasteride online cheap

ed meds online without prescription or membership prescription drugs canada buy online

https://medrxfast.com/# meds online without doctor prescription

best non prescription ed pills cheap pet meds without vet prescription

https://medrxfast.com/# buy anti biotics without prescription

prescription drugs without doctor approval pet antibiotics without vet prescription

non prescription erection pills prescription drugs without doctor approval

https://medrxfast.com/# how can i order prescription drugs without a doctor

п»їed drugs online from canada online prescription for ed meds

purchase fluconazole pills generic cipro 1000mg sildenafil 50 mg

comfortis without vet prescription how can i order prescription drugs without a doctor

https://medrxfast.com/# buy prescription drugs online legally

buy prescription drugs from india prescription drugs

order generic fluconazole 100mg order diflucan 100mg online order viagra 50mg sale

prescription drugs without doctor approval buy prescription drugs from canada

https://medrxfast.com/# best canadian pharmacy online

cost cialis 5mg purchase cialis generic sildenafil professional

https://medrxfast.com/# comfortis without vet prescription

best non prescription ed pills pet meds without vet prescription

https://medrxfast.com/# buy prescription drugs from india

best ed pills non prescription cvs prescription prices without insurance

overnight cialis delivery order cialis 5mg for sale sildenafil 150mg ca

how to get prescription drugs without doctor prescription drugs without doctor approval

https://medrxfast.com/# buy prescription drugs from canada cheap

canadian drug prices non prescription ed drugs

https://medrxfast.com/# prescription drugs online without doctor

legal to buy prescription drugs from canada buy prescription drugs without doctor

buy zithromax online order prednisolone 40mg pills buy metformin generic

how to get prescription drugs without doctor amoxicillin without a doctor’s prescription

подъемник телескопический

https://podyemniki-machtovyye-teleskopicheskiye.ru

https://medrxfast.com/# non prescription ed pills

prescription drugs without doctor approval prescription drugs without prior prescription

https://medrxfast.com/# best canadian pharmacy online

cvs prescription prices without insurance pet antibiotics without vet prescription

how to get prescription drugs without doctor buy canadian drugs

flagyl 400mg price bactrim for sale online metformin us

https://medrxfast.com/# online canadian pharmacy

legal to buy prescription drugs from canada dog antibiotics without vet prescription

https://medrxfast.com/# canadian drugs

https://medrxfast.com/# buy prescription drugs from canada cheap

prescription drugs canada buy online prescription drugs

cleocin 150mg pill budesonide buy online budesonide spray

carprofen without vet prescription ed meds online without prescription or membership

https://medrxfast.com/# prescription drugs online

pet meds without vet prescription canada non prescription ed pills

cleocin online order erythromycin 250mg online rhinocort tablet

https://medrxfast.com/# prescription without a doctor’s prescription

подъемник мачтовый

http://www.podyemniki-machtovyye-teleskopicheskiye.ru

online prescription for ed meds meds online without doctor prescription

https://medrxfast.com/# canadian drugs online

ed meds online without doctor prescription discount prescription drugs

non prescription ed drugs non prescription ed pills

cefuroxime 250mg brand buy cialis without prescription cialis 5mg pill

canadian drugs online prescription drugs without doctor approval

https://medrxfast.com/# buy cheap prescription drugs online

best online canadian pharmacy buy prescription drugs without doctor

https://medrxfast.com/# canadian pharmacy online

buy ceftin sale buy tadalafil pills buy tadalafil pills

подъемник мачтовый

http://www.podyemniki-machtovyye-teleskopicheskiye.ru

mexican pharmacy without prescription ed meds online canada

https://medrxfast.com/# canadian drugstore online

buy prescription drugs without doctor canadian pharmacy

purchase viagra online purchase cialis generic buy generic cialis 10mg

canada ed drugs anti fungal pills without prescription

https://medrxfast.com/# best canadian pharmacy online

cheap pet meds without vet prescription dog antibiotics without vet prescription

viagra us sildenafil next day delivery usa order cialis sale

https://medrxfast.com/# non prescription ed pills

canada ed drugs prescription drugs

prescription drugs online canadian drugstore

https://medrxfast.com/# ed meds online without doctor prescription

ivermectin 3mg without prescription purchase mebendazole for sale order tretinoin gel sale

non prescription ed pills buy canadian drugs

ivermectin for cov 19 cost prazosin 1mg tretinoin gel without prescription

brand tadalafil tadalis 20mg brand buy voltaren 100mg generic

Thanks very interesting blog!

https://comprarcialis5mg.org/it/

http://photographybycynthiablog.com/2017/05/lifestyle-session-tips/

cialis generico https://comprarcialis5mg.org/it/
